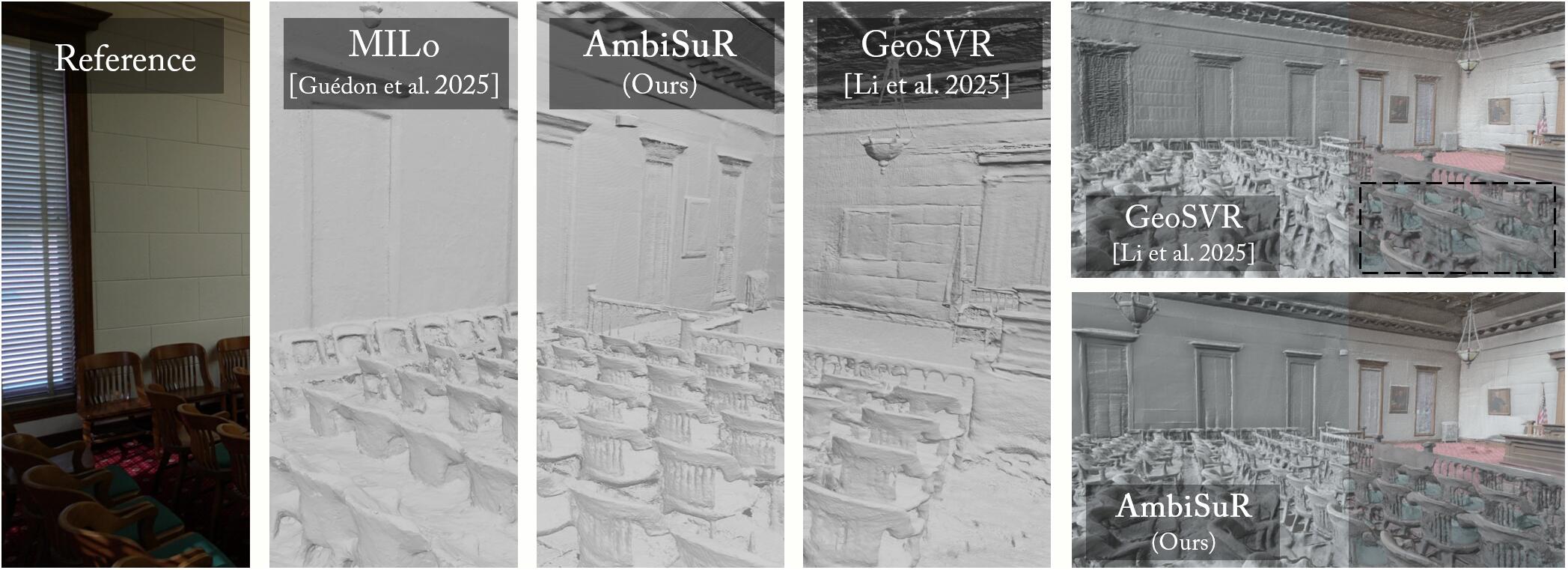

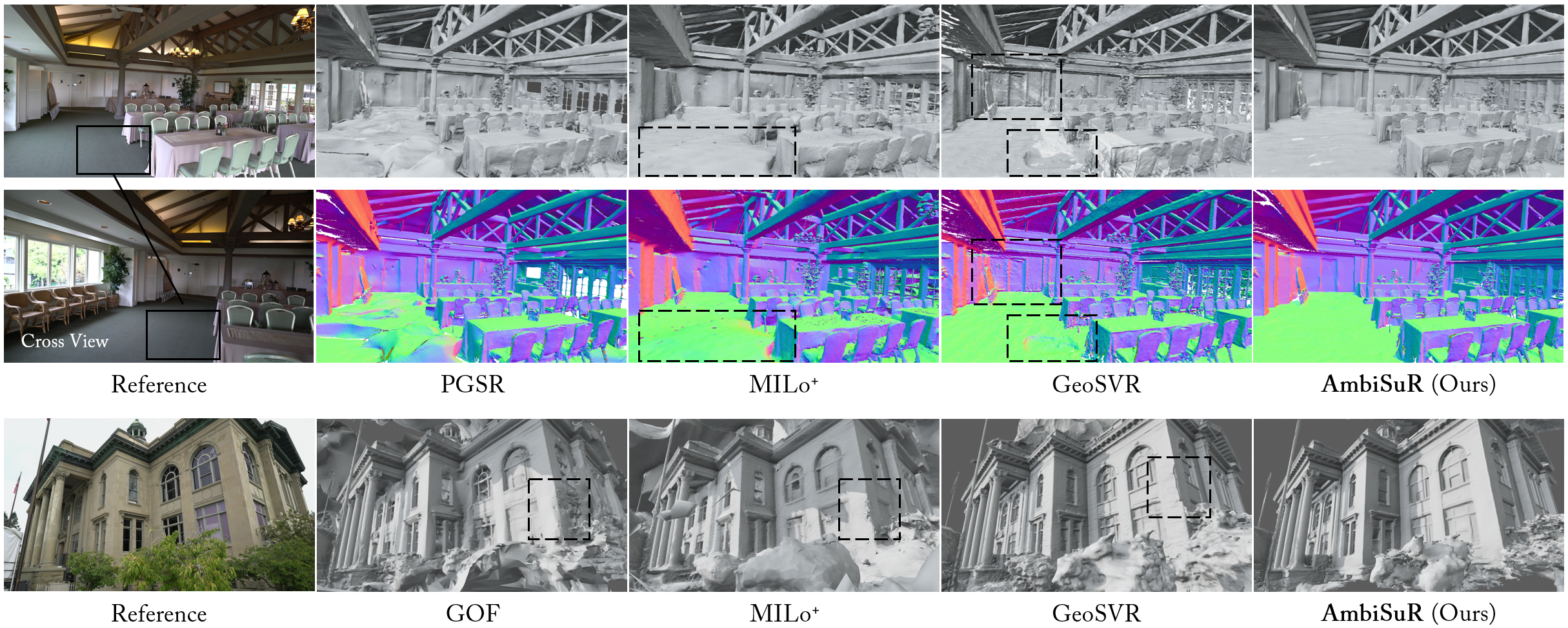

Surface reconstruction with differentiable rendering has achieved impressive performance in recent years, yet pervasive photometric ambiguities have severely bottlenecked existing approaches.

Revisiting the foundations, our investigation uncovers two built-in, primitive-wise ambiguities in the representation, and also reveals an intrinsic potential in Gaussian Splatting to indicate its own ambiguity. Building on these findings, we first introduce a photometric disambiguation that constrains the ill-posed geometry solution so that a definite surface forms. We then propose an ambiguity indication module that exploits this self-indication potential to identify under-constrained reconstructions and guide their correction, compensated by today's advancing geometry priors.

Extensive experiments demonstrate that our method outperforms existing approaches in surface reconstruction across various challenging scenarios, while also offering broad compatibility.

This method is mainly built on the open-source projects Gaussian Splatting, Depth-Anything-3, and PGSR. The style of the pipeline figure follows Perceptual-GS. Some scripts are borrowed from VGGT and SVRaster. We thank the authors for their great contributions.

```bibtex
@inproceedings{li2026ambisur,
  title={Revisiting Photometric Ambiguity for Accurate Gaussian-Splatting Surface Reconstruction},
  author={Li, Jiahe and Zhang, Jiawei and Bai, Xiao and Zheng, Jin and Yu, Xiaohan and Gu, Lin and Lee, Gim Hee},
  booktitle={International Conference on Machine Learning},
  year={2026},
  organization={PMLR}
}
```